Introduction

Welcome to the fascinating world of Artificial Intelligence (AI) – a landscape that has, over decades, been sketched and reshaped by countless technologies, definitions, and perspectives.

In this series of blogs, we’ll look at the influential technologies that have molded AI over time, examine the different definitions that reflect its evolution and diversity, and discuss the various viewpoints that shape how we comprehend AI, including its philosophy, ethics, and practical use. Prepare for a journey through the AI landscape, a place rich with innovation and boundless possibilities.

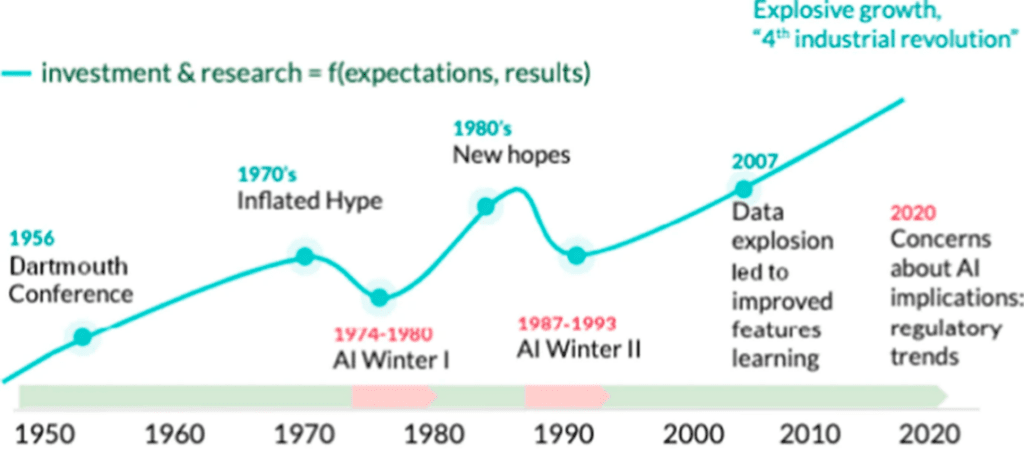

The timeline of AI illustrates periods of intense growth and progress (termed AI summers), which are subsequently followed by periods of decline or stagnation (known as AI winters), as depicted in the diagram below:

The winter, the summer, and the summer dream of artificial intelligence

Here, each cycle commences with hopeful assertions that a fully capable, universally intelligent machine is just a decade or so distant. Financial support floods in, and advancements appear rapid. However, after about a decade, progress hits a plateau, and the flow of funding diminishes. It’s evident that over the past decade, we have been experiencing an AI summer, given the substantial enhancements in computational power and innovative methods like deep learning, which have triggered significant progress.

This blog will look at key technological advancements and noteworthy individuals leading this field during the first AI summer, which started in the 1950s and ended during the early 70s. We provide links to articles, books, and papers describing these individuals and their work in detail for curious minds.

Early Years

The concept of ‘intelligence’ existing beyond the confines of the human form has captivated the human imagination since the onset of the 20th century. In 1929, a significant event took place in Japan when professor and biologist Makoto Nishimura created the country’s first robot, Gakutensoku. “Gakutensoku” symbolically means “learning from the laws of nature,” setting a precedent for future artificial intelligence. A decade later, in 1939, John Vincent Atanasoff and Clifford Berry made a significant stride in computational technology. They developed the Atanasoff-Berry Computer (ABC), a remarkable 700-pound machine able to solve 29 simultaneous linear equations. Further advancing the discourse around machine intelligence, in 1949, Edmund Berkeley penned the powerful phrase “a machine, therefore, can think” in his book “Giant Brains: Or Machines That Think.”

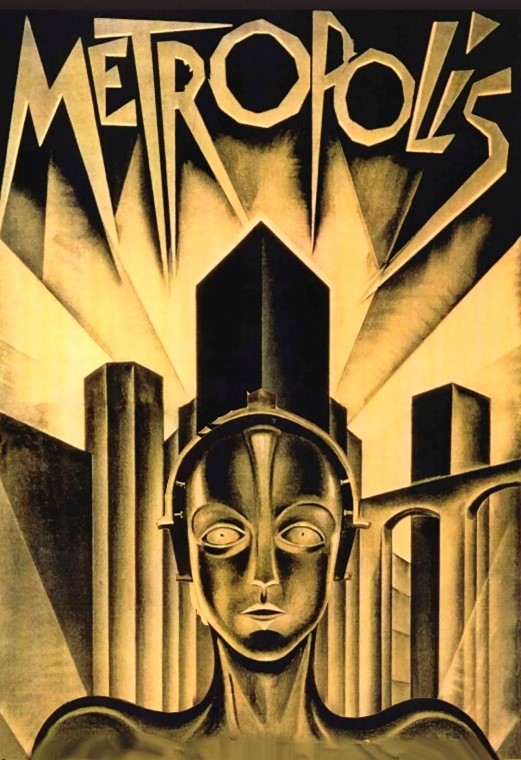

Until the 1950s, the notion of Artificial Intelligence was primarily introduced to the masses through the lens of science fiction movies and literature. In 1921, Czech playwright Karel Čapek released his science fiction play “Rossum’s Universal Robots,” in which he explored the concept of factory-made artificial people called “Robots,” the first known use of the word. From this point onward, the “robot” idea became popular in Western societies. Other popular characters included the ‘heartless’ Tin Man from The Wizard of Oz in 1939 and the lifelike robot that took on the appearance of Maria in the film Metropolis. By the mid-20th century, many respected philosophers, mathematicians, and engineers had successfully integrated fundamental ideas of AI into their writings and research.

Dartmouth Conference, John McCarthy and the birth of AI

It was in the mid-20th century that the concept of practical “thinking machines” began to take shape. The study of such machines and systems went by various names, such as Cybernetics, Automata Theory, and Information Processing. The term “Artificial Intelligence,” as we know it today, was coined by John McCarthy in the proposal for the famous Dartmouth Conference of 1956, which marked the formal birth of AI as a field of study. McCarthy stated that the conference was

“… to proceed on the basis of the conjecture that every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it.”

McCarthy emphasized that while AI shares a kinship with the quest to harness computers to understand human intelligence, it isn’t necessarily tethered to methods that mimic biological intelligence. He proposed that mathematical functions can be used to replicate the notion of human intelligence within a computer. McCarthy created the programming language LISP, which became popular amongst the AI community of that time. He also presented the idea of “Timesharing” and distributed computing. These ideas played a key role in the growth of the Internet in its early days and later provided foundations for the concept of “Cloud Computing.” McCarthy founded AI labs at Stanford and MIT and played a key role in the initial research into this field.

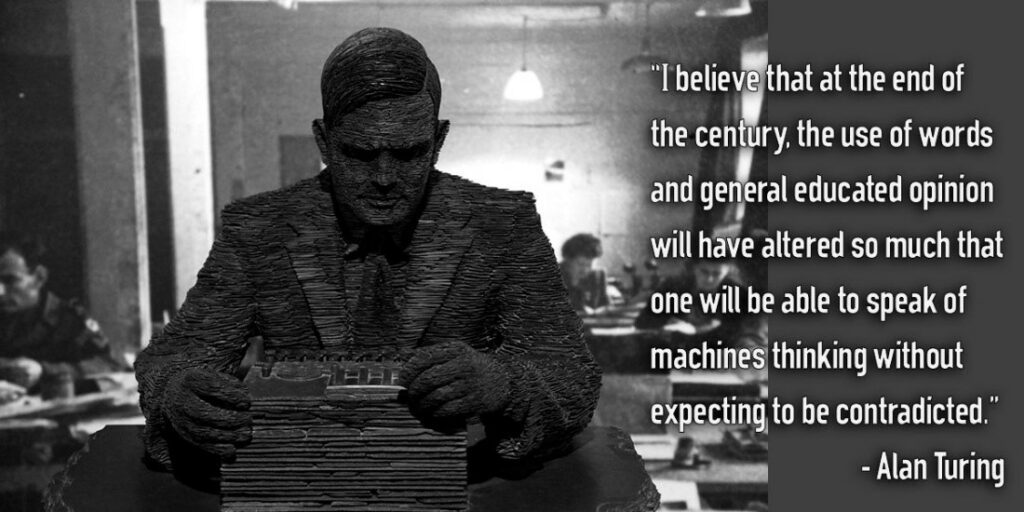

Alan Turing, The father of modern computing

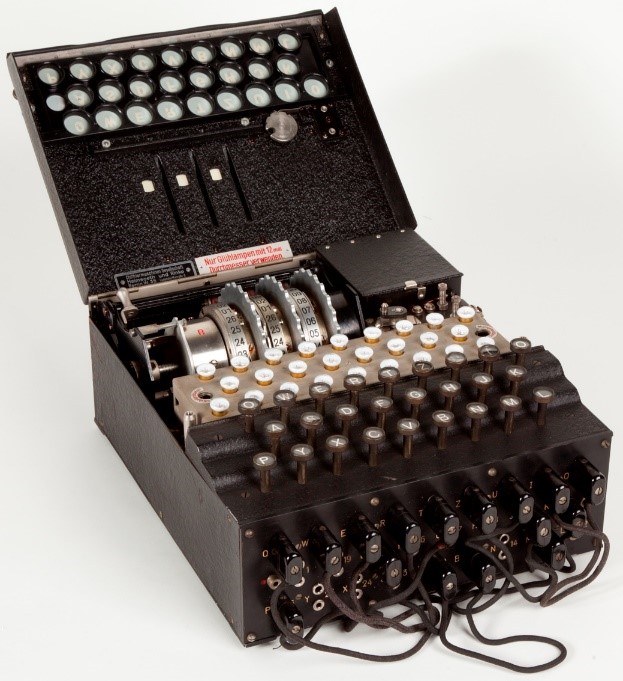

Alan Turing was another key contributor to developing a mathematical framework of AI. Turing developed the Bombe machine during World War II. The primary purpose of this machine was to decrypt messages encrypted with “Enigma,” a cipher device used by the German forces in the early to mid-20th century to protect commercial, diplomatic, and military communication. The codebreaking work on the Enigma and the Bombe is often described as an early precursor of ideas later developed in machine learning theory.

Military Enigma machine, model “Enigma I”, used during the late 1930s and during the war (Wiki)

A recreation of bombe machine for the movie “Imitation Game”

While discussing the history of AI, we inevitably return to the cornerstone laid down by Turing in his landmark 1950 publication, “Computing Machinery and Intelligence,” in which he discussed how to build intelligent machines and evaluate their intelligence. Often hailed as the “Father of computer science,” he proposed that just as humans utilize the information at their disposal and apply reasoning to solve problems or make decisions, machines could be designed to do the same with the concepts of “data” and “processing.”

In 1950, before the term AI was formally coined by McCarthy, Turing posed an interesting question that continues to echo in the corridors of AI development: “Can machines think?”

This led to the formulation of the “Imitation Game,” which we now refer to as the “Turing Test,” a challenge where a human tries to distinguish between responses generated by a human and a computer. Although the validity of this method has been questioned in modern times, the Turing test is still applied to the initial qualitative evaluation of cognitive AI systems that attempt to mimic human behaviors. In 1952, Turing also played a game using a chess program that he executed by hand on paper, a so-called “Paper Machine,” because the computers available at the time could not yet run it.

Turing’s ideas were highly transformative, redefining what machines could achieve. Turing’s theory didn’t just suggest machines imitating human behavior; it hypothesized a future where machines could reason, learn, and adapt, exhibiting intelligence. This perspective has been instrumental in shaping the state of AI as we know it today.

Neurons, Perceptrons and the Rise of Artificial Neural Networks

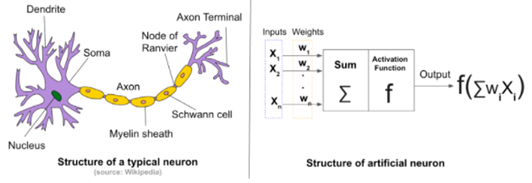

In 1943, Warren S. McCulloch, an American neurophysiologist, and Walter H. Pitts Jr, an American logician, introduced the Threshold Logic Unit, marking the inception of the first mathematical model for an artificial neuron. Their model could mimic a biological neuron by receiving external inputs, processing them, and providing an output, as a function of input, thus completing the information processing cycle. Although this was a basic model with limited capabilities, it later became the fundamental component of artificial neural networks, giving birth to neural computation and deep learning fields – the crux of contemporary AI methodologies.
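To make the idea of a threshold unit concrete, here is a minimal sketch in Python. It is a simplification rather than a reconstruction of the 1943 model: the function names, weights, and thresholds are chosen for illustration, and negative weights stand in for the inhibitory inputs of the original formulation.

```python
def threshold_unit(inputs, weights, threshold):
    """Fire (return 1) when the weighted sum of the inputs reaches the threshold."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total >= threshold else 0


# Illustrative weight and threshold choices (assumptions, not taken from the paper).
def logical_and(a, b):
    return threshold_unit([a, b], weights=[1, 1], threshold=2)


def logical_or(a, b):
    return threshold_unit([a, b], weights=[1, 1], threshold=1)


def logical_not(a):
    # A negative weight plays the role of an inhibitory input.
    return threshold_unit([a], weights=[-1], threshold=0)


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, "AND:", logical_and(a, b), "OR:", logical_or(a, b))
```

With fixed, hand-picked weights like these, a single unit can reproduce simple logic gates, which is roughly the sense in which the model "processes" its inputs into an output.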

A biological (left) vs artificial (right) Neuron

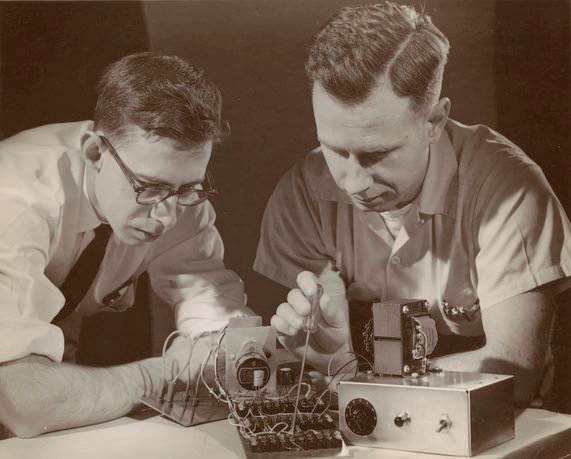

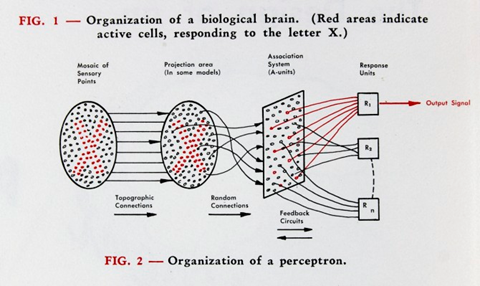

The next big step in the evolution of neural networks happened in July 1958, when the US Navy showcased the IBM 704, a room-sized, 5-ton computer that could learn to distinguish punch cards marked on the left from cards marked on the right through image recognition techniques. The IBM 704 ran a “Perceptron,” an invention by Frank Rosenblatt, who referred to it as “the first machine capable of generating an original idea.” At the time, Rosenblatt worked as a research psychologist and project engineer at the Cornell Aeronautical Laboratory.

Mark 1 Perceptron by Rosenblatt (left) and Charles Wightman (right)

Building on the earlier works of McCulloch and Pitts, Frank Rosenblatt was at the forefront of neural networks and connectionist machine learning in the 1950s with the development of the Mark I Perceptron. Although the term perceptron is now mainly associated with a specific machine learning algorithm, Rosenblatt’s original perceptron was the physical machine that implemented this algorithm as an electrical circuit.
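The perceptron algorithm mentioned above can be sketched in a few lines of Python. This is the learning rule as it is commonly presented in textbooks today, not a model of the Mark I hardware; the function names, the learning rate, and the choice of the AND function as training data are assumptions made for illustration.

```python
def predict(weights, bias, x):
    """Output 1 if the weighted sum plus bias is positive, otherwise 0."""
    activation = bias + sum(w * xi for w, xi in zip(weights, x))
    return 1 if activation > 0 else 0


def train_perceptron(samples, epochs=20, learning_rate=1.0):
    """Adjust weights after every misclassified sample until an epoch has no errors."""
    n_inputs = len(samples[0][0])
    weights = [0.0] * n_inputs
    bias = 0.0
    for _ in range(epochs):
        mistakes = 0
        for x, target in samples:
            error = target - predict(weights, bias, x)
            if error != 0:
                mistakes += 1
                # Nudge the weights toward the correct answer.
                weights = [w + learning_rate * error * xi for w, xi in zip(weights, x)]
                bias += learning_rate * error
        if mistakes == 0:
            break
    return weights, bias


# Illustrative training data: the logical AND function.
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

if __name__ == "__main__":
    weights, bias = train_perceptron(AND_SAMPLES)
    for x, target in AND_SAMPLES:
        print(x, "->", predict(weights, bias, x), "(expected", target, ")")
```

Unlike a threshold unit with hand-picked weights, the weights here start at zero and are learned from labelled examples, which is the key step Rosenblatt added.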

In a 1958 article in the New York Times, Rosenblatt expressed a highly aspirational perspective on the future of machine learning. The article described the perceptron as:

“The embryo of an electronic computer that [the Navy] expects will be able to walk, talk, see, write, reproduce itself and be conscious of its existence.”

Rosenblatt’s perceptron mimicking the biological brain with sensory inputs, connectionist neural learning and an output signal.

Rosenblatt’s claim drew great attention from the AI research community, although skeptics refused to accept it. Although the perceptron’s simple architecture showed some serious limitations in dealing with real-world problems, the principles underlying it helped spark the deep learning revolution in the 21st century. Rosenblatt later became an associate professor at Cornell’s Division of Biological Sciences. His contribution significantly shaped the foundation of today’s machine-learning landscape.

Following the works of Turing, McCarthy and Rosenblatt, AI research gained a lot of interest and funding from the US defense agency DARPA to develop applications and systems for military as well as business use. One of the key applications that DARPA was interested in was machine translation, to automatically translate Russian to English in the Cold War era.

STUDENT and ELIZA, Chatbots for Natural Language Processing

In 1964, Daniel Bobrow developed the first practical chatbot, called “STUDENT,” written in LISP as a part of his Ph.D. thesis at MIT. It was aimed at solving basic algebraic problems given in natural language. This program is often called the first natural language processing (NLP) system. STUDENT used a rule-based system (expert system) in which pre-programmed rules parsed the natural language input from users and produced a numerical answer.

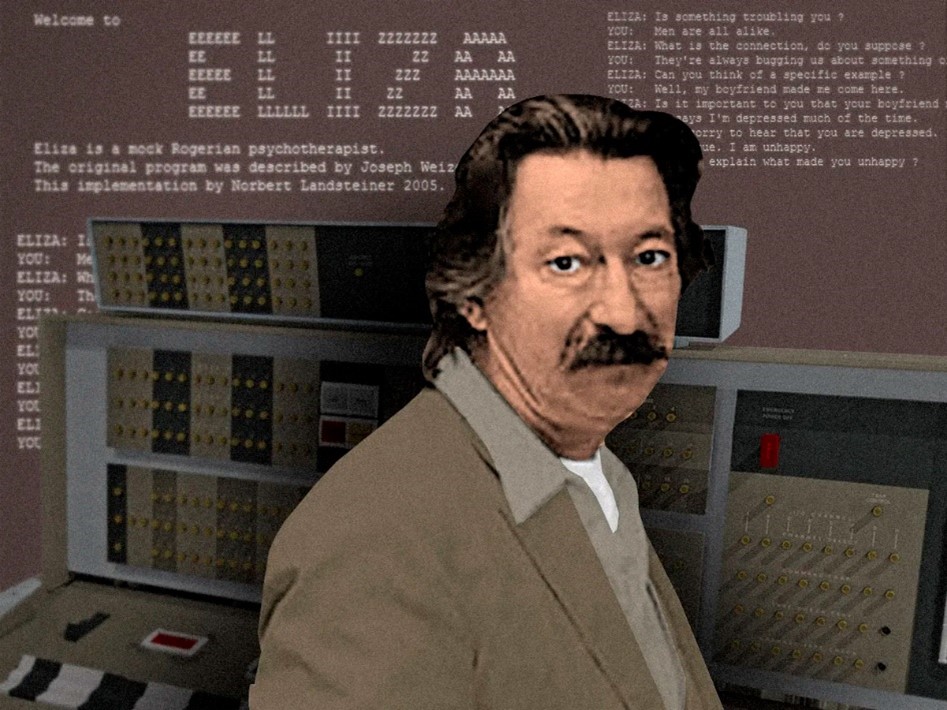

In the realm of AI, Alan Turing’s work significantly influenced German computer scientist Joseph Weizenbaum, a Massachusetts Institute of Technology professor. In 1966, Weizenbaum introduced a fascinating program called ELIZA, designed to make users feel like they were interacting with a real human. ELIZA was cleverly engineered to mimic a therapist, asking open-ended questions and engaging in follow-up responses, successfully blurring the line between man and machine for its users. ELIZA operated by recognizing keywords or phrases in the user input and producing a response that reused those keywords, drawn from a set of hard-coded response templates.

An online version of ELIZA can be viewed here.
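The keyword-and-template mechanism described above can be illustrated with a toy sketch in Python. The rules below are hypothetical examples written for this post and are not taken from Weizenbaum’s original script, which was considerably richer (for instance, it also swapped pronouns such as “my” and “your”).

```python
import re

# Hypothetical keyword rules: (pattern to look for, response template).
RULES = [
    (re.compile(r"\bi need (.+)", re.IGNORECASE), "Why do you need {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\b(mother|father|family)\b", re.IGNORECASE), "Tell me more about your {0}."),
]
DEFAULT_REPLY = "Please go on."


def respond(user_input):
    """Return the template of the first matching rule, filled with the user's own words."""
    text = user_input.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            return template.format(match.group(1))
    # No keyword found: fall back to a generic prompt.
    return DEFAULT_REPLY


if __name__ == "__main__":
    print(respond("I need a holiday."))   # Why do you need a holiday?
    print(respond("The weather is nice")) # Please go on.
```

Even this handful of rules shows why such a program can feel conversational while understanding nothing: it only reflects fragments of the user’s input back through canned templates.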

Systems like STUDENT and ELIZA, although quite limited in their abilities to process natural language, provided early test cases for the Turing test. These programs also initiated a basic level of plausible conversation between humans and machines, a milestone in AI development at the time.

Computer scientist Joseph Weizenbaum with his chatbot Eliza, running on a 36-bit IBM 7094 mainframe computer. (source)

Marvin Minsky and philosophical foundations of AI

Marvin Minsky, a renowned cognitive computer scientist, philosopher, and co-founder of MIT’s AI lab with John McCarthy, was another pivotal figure in the AI renaissance of the mid-20th century. Minsky described AI as “the science of making machines do things that would require intelligence, if done by men.”

In the context of intelligent machines, Minsky perceived the human brain as a complex mechanism that can be replicated within a computational system, and he believed such an approach could offer profound insights into human cognitive functions. His notable contributions to AI include extensive research into how to build “common sense” into machines. This essentially meant equipping machines with knowledge learned by human beings, something now referred to as “training” an AI system. Other achievements by Minsky include the creation of robotic arms and gripping systems, the development of computer vision systems, and the invention of the first electronic learning system. He named this device SNARC (Stochastic Neural Analog Reinforcement Calculator), a system designed to emulate a simple neural network. SNARC was the first connectionist neural network learning machine: it learned from experience and improved its performance through trial and error.

One of the 40 Neurons used in SNARC by Minsky

Contributing further to cognition, language comprehension, and visual perception as subfields of AI, Minsky pioneered the “Frame System Theory,” which proposed a method of knowledge representation within AI systems using a new form of a data structure called frames.

Minsky was one of the key skeptics of Rosenblatt’s claim that a simple perceptron could be trained to solve every problem associated with human intelligence. Minsky showed that a single-layer perceptron could not perform the XOR logical operation, and thus earlier claims about its capabilities were flawed.

He presented these ideas in his landmark 1969 book “Perceptrons: An Introduction to Computational Geometry,” which he co-authored with another noteworthy mathematician, Seymour Papert. This book explained why a single artificial neuron trained with Rosenblatt’s method can only learn linearly separable functions and cannot capture non-linearity. Charles Tappert, a professor of computer science at Pace University, said:

“Rosenblatt had a vision that he could make computers see and understand language, And Marvin Minsky pointed out that that’s not going to happen, because the functions are just too simple.”
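The XOR limitation can be explored with a short Python sketch. It searches a grid of candidate weights for a single threshold unit that reproduces AND, OR, and XOR, and then shows that two layers of hand-wired units do compute XOR. The grid range, step size, and function names are assumptions for illustration; the search only demonstrates the failure on this grid, while the general result follows from XOR not being linearly separable.

```python
from itertools import product

INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]
TRUTH_TABLES = {
    "AND": [0, 0, 0, 1],
    "OR": [0, 1, 1, 1],
    "XOR": [0, 1, 1, 0],
}


def step(value):
    return 1 if value > 0 else 0


def unit_outputs(w1, w2, bias):
    """Outputs of one threshold unit on all four input pairs."""
    return [step(w1 * a + w2 * b + bias) for a, b in INPUTS]


def find_single_unit(targets, grid):
    """Return the first (w1, w2, bias) on the grid that matches the targets, or None."""
    for w1, w2, bias in product(grid, repeat=3):
        if unit_outputs(w1, w2, bias) == targets:
            return (w1, w2, bias)
    return None


def xor_two_layer(a, b):
    """XOR built from three hand-wired units: AND(OR(a, b), NAND(a, b))."""
    h_or = step(a + b - 0.5)
    h_nand = step(-a - b + 1.5)
    return step(h_or + h_nand - 1.5)


# Candidate weights from -4.0 to 4.0 in steps of 0.5 (an arbitrary illustrative grid).
GRID = [i / 2 for i in range(-8, 9)]
solutions = {name: find_single_unit(t, GRID) for name, t in TRUTH_TABLES.items()}

if __name__ == "__main__":
    for name, found in solutions.items():
        print(name, "->", found)
    print("two-layer XOR:", [xor_two_layer(a, b) for a, b in INPUTS])
```

The search finds weights for AND and OR but none for XOR, whereas the two-layer arrangement succeeds. What was missing at the time was a practical way to learn such hidden-layer weights rather than wiring them by hand.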

Minsky was a giant in the field of AI. He profoundly impacted the industry with his pioneering work on computational logic. He significantly advanced the symbolic approach, using complex representations of logic and thought. His contributions resulted in considerable early progress in this approach and have permanently transformed the realm of AI.

Other notable events of the first AI summer

1961: The Unimate, the world’s first industrial robot, was deployed on a General Motors assembly line for welding and transporting car parts. This robot was developed by Joseph Engelberger, also known as the “Father of Robotics.”

1963: Donald Michie, a British AI researcher, developed MENACE (Matchbox Educable Noughts and Crosses Engine), a machine built from matchboxes that could learn to play the classic game of noughts and crosses (tic-tac-toe).

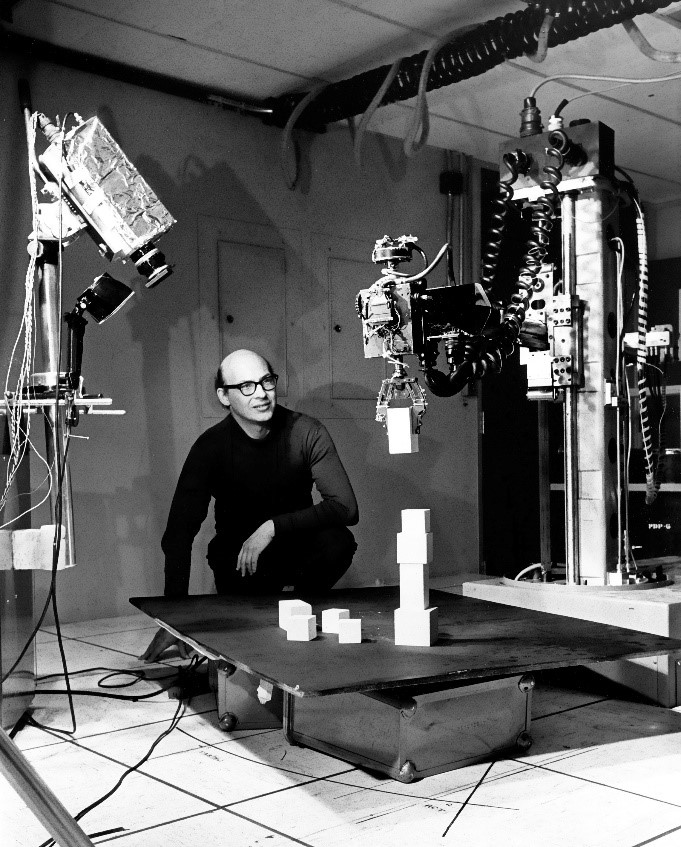

1966: Shakey, the very first mobile robot, was developed by Charles Rosen at Stanford Research Institute (SRI) and funded by DARPA. Shakey demonstrated the ability to perceive and reason about its surroundings. This robot was referred to as “The first electronic person.”

1967: Nearest Neighbour Algorithm was developed at Stanford Research Institute for pattern recognition. The algorithm was initially used to map routes and is still heavily used for multiple business-related AI applications.

1970: Finnish mathematician and computer scientist Seppo Linnainmaa described the reverse mode of automatic differentiation in his master’s thesis. This technique underlies backpropagation, a key learning algorithm in modern AI systems.
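As an illustration of the nearest neighbour idea from the 1967 entry above, here is a minimal Python sketch of its use for pattern classification. The training points, labels, and function name are made up for this example, and the sketch uses plain Euclidean distance with a single neighbour.

```python
import math


def nearest_neighbour(training_points, query):
    """Return the label of the training point closest to the query."""
    _, closest_label = min(
        training_points, key=lambda item: math.dist(item[0], query)
    )
    return closest_label


# Made-up 2D points with two class labels.
TRAINING = [
    ((1.0, 1.0), "A"),
    ((1.5, 2.0), "A"),
    ((5.0, 5.0), "B"),
    ((6.0, 4.5), "B"),
]

if __name__ == "__main__":
    print(nearest_neighbour(TRAINING, (2.0, 1.5)))
    print(nearest_neighbour(TRAINING, (5.5, 5.0)))
```

There is no training step at all: the method simply stores labelled examples and assigns a new point the label of whichever stored example lies closest.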

1974 – 1980, The first AI winter

The inception of the first AI winter resulted from a confluence of several events. Initially, there was a surge of excitement and anticipation surrounding the possibilities of this new promising field following the Dartmouth conference in 1956. For the masses, the excitement was fueled by much positive media coverage. However, the hype began to fizzle out as the field experienced many setbacks. During the 1950s and 60s, the world of machine translation was buzzing with optimism and a great influx of funding. However, this progress hit a roadblock, and things slowed considerably. This period of slow advancement, starting in the 1970s, was termed the “silent decade” of machine translation.

Yehoshua Bar-Hillel, an Israeli mathematician and philosopher, voiced his doubts about the feasibility of machine translation in the late 50s and 60s. He argued that for machines to translate accurately, they would need access to an unmanageable amount of real-world information, a scenario he dismissed as impractical and not worth further exploration. Before the advent of big data, cloud storage and computation as a service, developing a fully functioning NLP system seemed far-fetched and impractical. A chatbot system built in the 1960s did not have enough memory or computational power to work with more than 20 words of the English language in a single processing cycle.

Echoing this skepticism, the Automatic Language Processing Advisory Committee (ALPAC), formed in 1964, asserted that there were no imminent or foreseeable signs of practical machine translation. In its 1966 report, the committee declared that machine translation of general scientific text had yet to be accomplished and was not expected in the near future. These gloomy forecasts led to significant cutbacks in funding for all academic translation projects.

With Minsky and Papert’s harsh criticism of Rosenblatt’s perceptron and of his claims that it might be able to mimic human behavior, research into neural computation and connectionist learning approaches also came to a halt. They argued that for neural networks to be functional, they must have multiple layers, each carrying multiple neurons. According to Minsky and Papert, such an architecture would theoretically be able to replicate intelligence, but there was no learning algorithm at that time to train it. It was only in the 1980s that such an algorithm, called backpropagation, came into wide use.

The renowned Lighthill Report, commissioned by the British Science Research Council and published in 1973, critiqued the then-existing state of Artificial Intelligence. The report made a bold assertion that the claims made by AI researchers were highly exaggerated. It stated that

“In no part of the field have discoveries made so far produced the major impact that was then promised.”

The report specifically highlighted machine translation as the area with the most underwhelming progress despite substantial financial investments, commenting that,

“… enormous sums have been spent with very little useful results …”

A debate on the Lighthill Report, aired on BBC in 1973

After the Lighthill report, governments and businesses worldwide became disillusioned with AI. Major funding organizations refused to invest their resources into AI, as the successful demonstrations of human-like intelligent machines were only at the “toy level” with no real-world applications. The UK government cut funding for almost all universities researching AI, and this trend spread across Europe and to the USA. DARPA, one of the key investors in AI, cut its research funding heavily and only granted funds for applied projects.

The initial enthusiasm towards the field of AI that started in the 1950s with favorable press coverage was short-lived due to failures in NLP, limitations of neural networks and finally, the Lighthill report. The winter of AI started right after this report was published and lasted till the early 1980s.

Shakeel is the Director of Data Science and New Technologies at TechGenies, where he leads AI projects for a diverse client base. His experience spans business analytics, music informatics, IoT/remote sensing, and governmental statistics. Shakeel has served in key roles at the Office for National Statistics (UK), WeWork (USA), Kubrick Group (UK), and City, University of London, and has held various consulting and academic positions in the UK and Pakistan. His rich blend of industrial and academic knowledge offers a unique insight into data science and technology.